I Tested 4 AI Workspace Tools. Only 1 Actually Worked.

As a product marketing manager at a SaaS company, I'm professionally required to be aware of new tools. Part of the job. But I'm also professionally trained to be suspicious of launch copy, because I write it. I know exactly what "revolutionizes your workflow" means: it means someone in a marketing seat wrote a sentence that sounded compelling and nobody pushed back.

So when Q1 hit and my Slack started filling up with "you have to try this" messages from people who'd just discovered AI workspace tools, I did something I probably should have done months ago: I set up a proper test.

Four tools. Thirty days. My actual job, not a sandbox demo.

Here's what happened.

The Setup

I picked tools that kept showing up in my feeds and had genuine traction — not stuff with 200 upvotes on Product Hunt, but tools my team's leadership was already forwarding around with subject lines like "Worth exploring?" The categories: a collaboration/meeting assistant, an AI writing tool, a project management AI, and an automation builder. I'm keeping the specific names out of this — my company uses tools in some of these categories and I'd rather talk about the patterns than invite a vendor debate in the comments.

My test framework was simple. I stole it from how I evaluate any new tool at work:

Adoption rate: Was I still using it by week 3, without forcing myself?

Time math: Did it actually save time, or just move the work somewhere else?

Integration friction: Did it slot into what I already use, or did it require a new tab, a new login, a new mental model?

Team lift: Could anyone else on my team use it without a 30-minute onboarding call?

I kept a simple log — just a notes doc I added to every few days. Nothing fancy. A typical entry looked like: "Day 11, meeting tool — edited summary again before sending, took 12 min, would have taken 8 to write from scratch. Starting to wonder." If I forgot to update it for a week, that told me something too.
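If you'd rather keep the "time math" in code than in a notes doc, here's a minimal sketch of the same idea. The `LogEntry` fields and the `net_minutes_saved` helper are names made up for illustration. The only real data point is the Day 11 entry quoted above, and the "from scratch" number is still a personal estimate, not a measurement.

```python
from dataclasses import dataclass


@dataclass
class LogEntry:
    day: int
    tool: str
    minutes_with_tool: float     # time spent getting the tool's output into usable shape
    minutes_from_scratch: float  # estimate of doing the same task by hand

    @property
    def minutes_saved(self) -> float:
        # Positive means the tool saved time; negative means it cost time.
        return self.minutes_from_scratch - self.minutes_with_tool


def net_minutes_saved(entries: list[LogEntry], tool: str) -> float:
    """Sum the time saved (or lost) across all log entries for one tool."""
    return sum(e.minutes_saved for e in entries if e.tool == tool)


# The Day 11 entry: 12 minutes editing vs. 8 minutes to write from scratch.
log = [
    LogEntry(day=11, tool="meeting tool", minutes_with_tool=12, minutes_from_scratch=8),
]

print(net_minutes_saved(log, "meeting tool"))  # -4
```

A negative total is the "just moved the work somewhere else" signal: the tool did something, but the cleanup cost more than the task.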

The Three That Didn't Make It

1. The Meeting Assistant (Abandoned: Day 19)

The pitch: it joins your calls, transcribes everything, pulls out action items, and sends summaries. I was genuinely excited about this one. I average six meetings a week and I'm not always the one driving, which means I spend a lot of post-meeting time reconstructing what I was supposed to do.

The reality: my subjective read was that the transcriptions were maybe 80% accurate on a normal call, which sounds okay until you realize that 20% wrong in a meeting transcript is a lot. "Launch timeline is Q2" became "launch timeline is cute" in one summary I almost forwarded. I stopped forwarding them after that. (I'm not citing a benchmark here, just what I observed across nearly three weeks of calls with varying audio quality and accents on my team.)

The action item extraction was worse. It pulled out every sentence that had the word "can" or "will" in it, so my summaries were full of fake tasks nobody assigned to anyone. I spent more time editing the summaries than I would have spent just writing them from memory.
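To show why that behavior produces junk, here's a rough sketch of keyword-based extraction. This is not the vendor's code, which I never saw. It's an assumption that reproduces what I observed, and the transcript and names in it are invented for the example.

```python
import re

# Hypothetical trigger words, based only on the behavior described above.
TRIGGER_WORDS = {"can", "will"}


def naive_action_items(transcript: str) -> list[str]:
    """Return every sentence containing a trigger word, whether or not it is a task."""
    sentences = re.split(r"(?<=[.!?])\s+", transcript.strip())
    items = []
    for sentence in sentences:
        words = set(re.findall(r"[a-z']+", sentence.lower()))
        if words & TRIGGER_WORDS:
            items.append(sentence)
    return items


# Invented sample transcript: only the first sentence is a real action item.
transcript = (
    "Dana will send the pricing deck by Friday. "
    "I think we can all agree the demo went well. "
    "It will probably rain during the offsite."
)

for item in naive_action_items(transcript):
    print("-", item)
```

All three sentences get flagged, but only the first has an owner and a deliverable. A keyword match can't tell a commitment from small talk, which is why every summary needed a cleanup pass.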

By day 19, I was still in the meetings, I was taking my own notes anyway as backup, and then I was cleaning up the AI summaries. Three jobs instead of one.

2. The AI Writing Tool (Abandoned: Day 14)

This one's embarrassing because I should have known better — I work in marketing, I use language for a living, and I still thought maybe an AI docs assistant would help me draft internal briefs faster.

It didn't. Not because the output was bad, exactly, but because editing AI-generated prose takes me longer than writing from scratch. My brain processes "here's a draft, improve it" differently than "here's a blank page, go." The editing mode slows me down. I didn't anticipate that.

Also, our company writes in a very specific voice. Over four years, our internal communication has accumulated a set of implicit style choices that nobody has written down, because nobody needed to until we tried to outsource the writing to a language model. The AI didn't know that we say "customers," not "users"; that we never use "leverage" as a verb; or that our leadership finds exclamation points in work contexts vaguely unprofessional.

Fourteen days in I just stopped opening it. It was still in my browser tabs. I just stopped clicking on it.

3. The Project Management AI (Abandoned: Day 22)

This one made the most promises — and it's the one I feel most qualified to evaluate, given what I do. The pitch was basically: AI learns your work patterns, prioritizes your task list, surfaces what matters, predicts blockers before they happen.

In practice: it ingested my Asana tasks and then suggested I work on things in an order that made no sense to me. I kept overriding it. It kept recalibrating. After two weeks it was suggesting things in a slightly different wrong order.

The problem isn't the AI. The problem is that prioritization isn't just a logic problem — it's a political, relational, contextual judgment call. My "most important" task isn't the one with the nearest deadline or the most dependencies; it's the one my VP mentioned offhand in a hallway conversation last Thursday. The AI has no visibility into that. It can't.

I dropped it on day 22 when I realized I was maintaining two prioritization systems: the tool's and my own. That's the opposite of productivity.

The One That Stuck

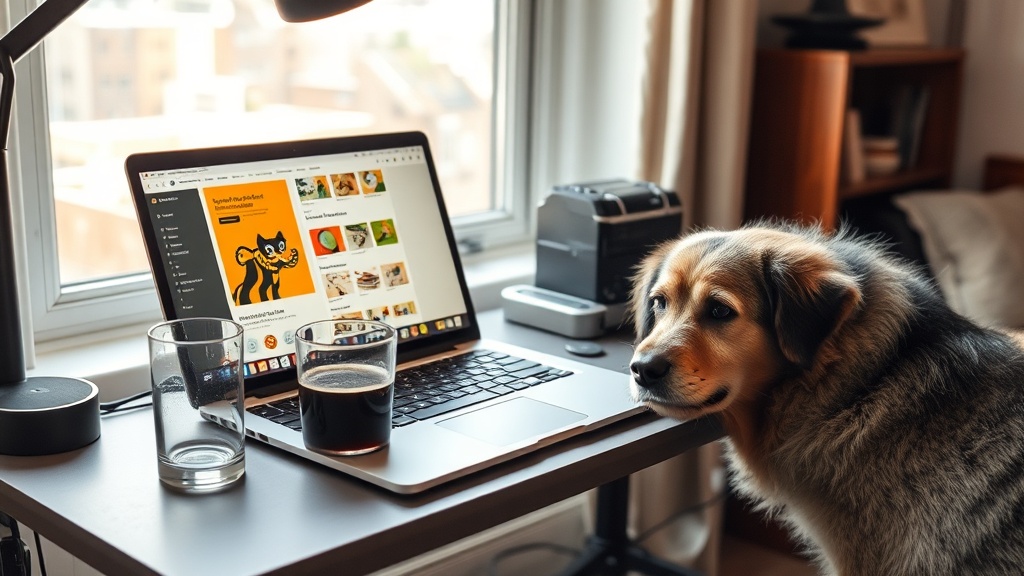

The tool that's still open on my desktop as I write this: a workflow automation builder that connects my existing tools without requiring me to leave them.

I'm being a little vague intentionally — not because I'm hiding something but because the specific tool matters less than what it does. The category is no-code automation: if X happens in Slack, do Y in Notion. If this form gets submitted, create this task and send this message. That kind of thing.
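If the "if X, do Y" shape is unfamiliar, here's a minimal sketch of it. No-code tools hide this behind a visual builder, so treat the `Rule` class, the `run_rules` function, and the `#request` tag as hypothetical. The actions just return a description of what they would do instead of calling any real Slack or Notion API.

```python
from dataclasses import dataclass
from typing import Callable

Event = dict  # e.g. {"source": "slack", "text": "..."}


@dataclass
class Rule:
    name: str
    trigger: Callable[[Event], bool]  # "if X happens..."
    action: Callable[[Event], str]    # "...do Y" (here: describe what would be done)


def run_rules(event: Event, rules: list[Rule]) -> list[str]:
    """Run every rule whose trigger matches and collect what each action reports."""
    return [rule.action(event) for rule in rules if rule.trigger(event)]


rules = [
    Rule(
        name="slack-request-to-notion",
        trigger=lambda e: e["source"] == "slack" and "#request" in e["text"],
        action=lambda e: f"notion: created task '{e['text']}'",
    ),
    Rule(
        name="form-to-task",
        trigger=lambda e: e["source"] == "form",
        action=lambda e: f"notion: created task from form '{e['text']}'",
    ),
]

event = {"source": "slack", "text": "#request update the launch one-pager"}
print(run_rules(event, rules))
```

The point of the shape is that nothing here asks for judgment. Each rule either matches or it doesn't, which is a big part of why this category can run unattended.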

I'd tried tools in this category before and bounced off them. This one hit differently for two reasons:

First, it was genuinely invisible once set up. I built four automations in week one, and then I stopped thinking about the tool. It just ran. The meeting assistant required me to check it. The project management AI required me to consult it. This one just... happened in the background. I didn't interact with it; it acted on my behalf.

Second, it made my existing tools smarter rather than replacing them. I didn't have to change where I lived — Slack, Notion, Google Calendar. I just connected them in ways that cut out the manual copy-pasting I'd been doing for two years and somehow never fixed.

By week four I'd back-calculated roughly 90 minutes saved per week — based on the specific copy-paste tasks I'd logged in week one and stopped doing. Not a controlled experiment. But it's concrete enough that I can point to it: three recurring handoffs between Slack and Notion that used to be manual, now automated, averaging about 30 minutes each per week. That's real, it's consistent, and it required zero ongoing effort after setup.

What I Got Wrong

I went into this assuming the "best" AI tool would be the one with the most capabilities. I was wrong about that.

The tools I abandoned weren't bad because they had weak AI. Honestly, the AI in the meeting assistant was impressive. The writing tool's output was coherent. The project management AI's interface was beautiful. They failed because they required me to work in a new way to get the benefit — and that friction cost more than the value returned.

The automation tool isn't the most technically impressive thing I tested. It doesn't have a GPT-4 integration or a neural-network prioritization engine or whatever. It just does simple things reliably.

I think there's something worth sitting with here: the AI tools that market themselves the hardest are usually the ones trying to replace your workflow. The ones that actually stick are the ones that serve it. The former require you to change; the latter change around you.

That's not a revolutionary insight. But when you're evaluating the next batch of tools your leadership wants you to pilot — and it's Q1, so they're coming — it's a useful filter.

The question isn't "what does this tool do?" It's "what does this tool require from me to do it?"

If the answer is "learn a new thing, maintain a new system, change a habit I've built over three years" — the math probably doesn't work out. Especially not at week 3, when the novelty has worn off and you're staring at a deadline.

Router's looking at me like it's time to stop typing and take him outside, so I'll wrap here.

If you're mid-Q1 and your company is rolling out AI tools right now, my actual advice: run a 3-week test, not a 30-day one. Week 3 is when the real answer shows up. Any tool that's still in your workflow at day 21 without you forcing it is worth keeping. Everything else is product marketing.

Which, again — I would know.

Testing something in your own workflow? I'd genuinely like to hear what's working. Drop it in the comments.